TL;DR: There are five levels of AI automation, and most people stop at level two or three. Level four hits you with a cognitive load wall that makes you question the whole thing. Push through it by externalising your skills, processes, and decision points into automations, and you reach the Dark Factory: a software factory where ideas are the only limiting factor.

The Dark Factory: Building the Software Factory That Runs Without You

I read something recently that stopped me in my tracks.

Saumya Tyagi wrote a piece on HackerNoon called “The Dark Factory Pattern.” In manufacturing, a dark factory is one that operates with the lights off. No humans on the floor. Robots building cars, 24 hours a day, in the dark.

Tyagi applied the concept to software: a pipeline where no human writes code, no human reviews code, and no human manually tests code. Humans write specs and acceptance criteria. That’s it.

When I read that, I didn’t think “that’s interesting.” I thought: “that’s what I’ve been building.”

This post is a continuation of my AI journey. Last time I wrote about how pipelines are the product. This is what comes next. This is what I’ve been working on, and where I think the real frontier is.

The Five Levels (and Where Most People Get Stuck)

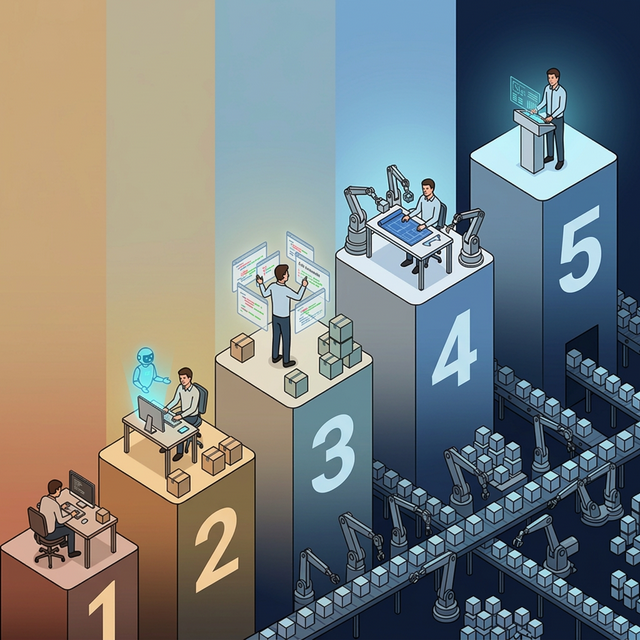

Dan Shapiro wrote about the five levels of AI automation, borrowing from the self-driving car classification. It’s worth reading the whole thing, but here’s the short version:

- Level 1: AI completes your sentences. You do everything else.

- Level 2: AI is a junior colleague. You get a productivity boost, but the process stays the same.

- Level 3: AI becomes the primary coder. You shift to reviewing.

- Level 4: You’re not coding. You’re not reviewing. You’re managing autonomous execution.

- Level 5: The Dark Factory. Specs in, tested code out. Lights off.

Shapiro makes an observation that stuck with me: every level feels like you’re done. Level two feels incredible. You’re in flow. The code is flying. You feel like you’ve reached the ceiling.

You haven’t.

December: The Loop That Changed Everything

In December 2025, I was working with something I called the Ralph Wiggum loop. Simple concept: a while-do loop that rotated code through iterations until a condition was met.

Picture a line of a hundred people doing a task. The first person gets it partially right. The next person picks up where they left off, checks the spec, and carries it forward. Then the next. And the next. Each one building on the last, correcting, refining, until the specification is met.
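Here is a minimal sketch of that loop in Python. It is not the script I actually ran: `ralph_loop`, `toy_agent`, and `toy_spec` are hypothetical names, and the "agent" is a toy function that adds one missing step per pass so the example runs on its own.

```python
from typing import Callable


def ralph_loop(
    work: str,
    improve: Callable[[str], str],
    meets_spec: Callable[[str], bool],
    max_iterations: int = 100,
) -> tuple[str, int]:
    """Hand `work` to `improve` again and again until `meets_spec` accepts it."""
    iterations = 0
    while not meets_spec(work):
        if iterations >= max_iterations:
            raise RuntimeError(f"spec not met after {max_iterations} iterations")
        work = improve(work)  # the next "person in the line" picks it up
        iterations += 1
    return work, iterations


# Hypothetical stand-ins: a real loop would call a coding agent and a real check.
REQUIRED_STEPS = ["parse", "validate", "save"]


def toy_agent(work: str) -> str:
    """Add the first required step that is still missing."""
    done = work.split()
    for step in REQUIRED_STEPS:
        if step not in done:
            return " ".join(done + [step])
    return work


def toy_spec(work: str) -> bool:
    """The spec is met once every required step is present, in order."""
    return work.split() == REQUIRED_STEPS


result, passes = ralph_loop("", toy_agent, toy_spec)
print(result, passes)
```

The iteration cap is my addition. Without it, a spec that can never be met keeps the loop spinning forever.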

Simplistic? Yes. But simplicity is sometimes power.

That loop taught me something fundamental: attempts are effectively unlimited. What if the agent gets it wrong the first time? Another pass of the loop is coming to pick it up and carry on. Self-correcting, self-improving, relentless.

Everything is a loop. All agents are loops. How you use those loops, where they sit, how they work together: that’s the key.

The Proof Point: Nobody Was Actually Using Agents

Then in January, OpenClaw dropped. It gave the general public access to agentic workflows: file access, computer use, cron-based automations. And the internet lost its mind.

I watched the reaction with a specific thought: anyone who knew how agents worked could see there was nothing fundamentally new here. To be fair, the capability was more than enough to give people a taste of agentic workflows. But the people going crazy about it? They shouldn’t have been that surprised. Not if they’d actually been using agents.

The hype reaction proved something: most people talking about AI agents weren’t actually using them. They were watching others use them. Talking about what agents could do, without giving agents access to their own systems.

That gap between people watching and people doing? It’s where most of the AI conversation lives.

I don’t say this to be dismissive. Anyone working in AI and learning it has my respect. But the reaction to OpenClaw was a signal. It told me where the industry actually was versus where it claimed to be.

The Cognitive Load Wall

So let’s talk about what happens at level four. Because this is where people break.

At level four you’re an orchestrator. A programme manager. A project manager. A Scrum master. All at once.

You have to know everything that’s going on. You have to understand the architecture, document it, hold it all in your head. You have to understand every workflow, every task, every phase. You have to make sure all of it fits into agent memory, because agents are like goldfish. They forget. So you document obsessively, knowing that if you don’t, the next agent session starts from zero.

Multiple streams. Multiple contexts. Constant switching. The cognitive load is extreme.

And this is where people look at it and say: “Why are we even bothering? It’s just one person doing the job of ten, and all this tool does is disguise the fact that people are going to get paid less for more.”

I get why people think that. At level four, it genuinely feels that way. You’re doing more work, not less. The agents multiply your output, but they also multiply the management overhead. You’re carrying every context, every plan, every decision in your head. One person’s brain trying to keep up with ten agents’ worth of execution.

Most people won’t get past this point. They’ll look at the cognitive load and decide it’s not worth the effort.

Breaking Through: Externalise Everything

Here’s the thing about the cognitive load wall: you’re not supposed to carry it all in your head. That’s the whole point.

To get from level four to level five, you have to offload. Not just some of it. All of it.

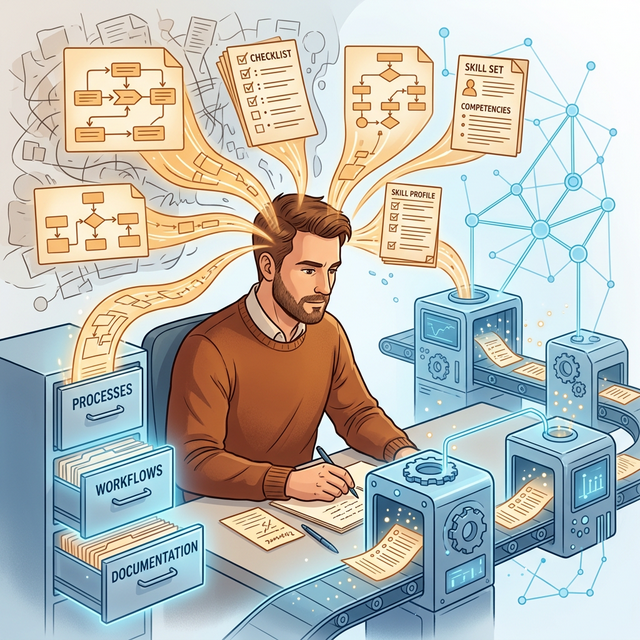

Every skill you have internalised, you disassemble and put on paper. Every process you navigate instinctively, you document as a workflow an agent can follow. Every quality check you do by feel, you codify into a validation step. Every decision point that lives in your gut, you turn into a rule.
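As a rough illustration, here is what turning a gut check into explicit rules might look like. Everything here is hypothetical: the `Change` fields, the 400-line threshold, and the rule names are assumptions for the sketch, not rules from my own factory.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Change:
    """A proposed change, reduced to the facts the rules need (hypothetical fields)."""
    lines_changed: int
    has_tests: bool
    touches_migrations: bool


@dataclass(frozen=True)
class Rule:
    """One externalised decision point: a name plus a yes/no check."""
    name: str
    passes: Callable[[Change], bool]


# Each entry replaces something a reviewer would otherwise judge by feel.
RULES = [
    Rule("small enough to reason about", lambda c: c.lines_changed <= 400),
    Rule("ships with tests", lambda c: c.has_tests),
    Rule("no schema migration without a human", lambda c: not c.touches_migrations),
]


def failed_rules(change: Change, rules: list[Rule] = RULES) -> list[str]:
    """Return the names of every rule the change breaks, in rule order."""
    return [rule.name for rule in rules if not rule.passes(change)]


print(failed_rules(Change(lines_changed=120, has_tests=True, touches_migrations=False)))
print(failed_rules(Change(lines_changed=900, has_tests=False, touches_migrations=False)))
```

The point is less the specific rules than the shape: once a judgement has a name and a check, an agent can run it and report exactly which one failed.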

This is harder than it sounds. Because you don’t actually disassemble the skills in your head naturally. You just know how to do them. As people. As humans. Turning tacit knowledge into explicit instructions that an agent can traverse? That’s a skill most people don’t have and don’t want to develop.

But once you fill those gaps, once you create the skills and the automations and the processes, everything starts freeing up. The cognitive load drops. The factory starts running.

What the Dark Factory Actually Is

So what is it? Not the manufacturing metaphor. The actual thing I’m building.

Skills. Gaps. Processes. Decision points. All connected so that an agent can logically traverse them and get a desired outcome.

If the outcome isn’t desired? It loops back. Over time, more agents join, more learning accumulates, and the system self-improves.

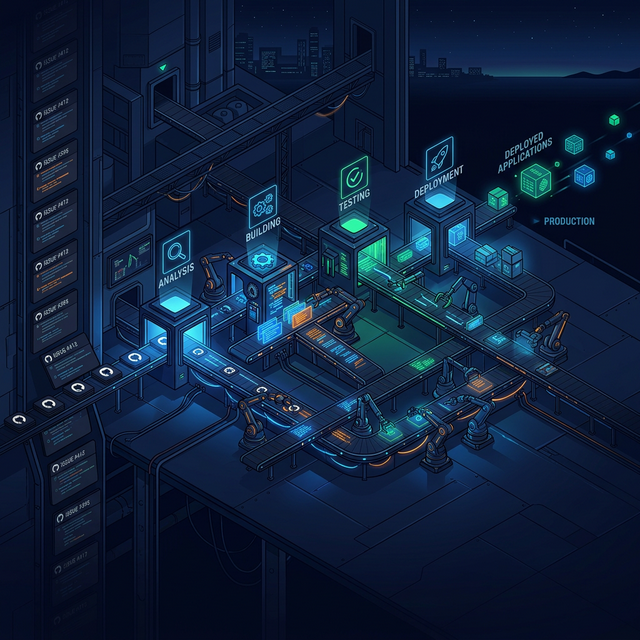

End to end: a GitHub issue comes in. The system reads the spec. An agent writes the code. Another agent validates it. Tests run. Quality checks pass. Code ships. If something fails, it loops back and tries again.
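To make the flow concrete, here is a toy sketch of it, assuming each stage is just a function that returns a failure message or `None`. The names (`run_factory`, `toy_writer`, `validate`, `run_tests`) are hypothetical, the "agent" is hard-coded to get it wrong once, and there is no GitHub or LLM call involved.

```python
from typing import Callable, Optional

# A stage inspects (spec, code) and returns a failure message, or None if it passes.
Stage = Callable[[str, str], Optional[str]]


def first_failure(spec: str, code: str, stages: list[Stage]) -> Optional[str]:
    """Run the stages in order and stop at the first one that fails."""
    for stage in stages:
        message = stage(spec, code)
        if message is not None:
            return message
    return None


def run_factory(
    spec: str,
    write_code: Callable[[str, list[str]], str],
    stages: list[Stage],
    max_attempts: int = 5,
) -> tuple[str, int]:
    """Write code, check it, and loop back with feedback until every stage passes."""
    feedback: list[str] = []
    for attempt in range(1, max_attempts + 1):
        code = write_code(spec, feedback)
        failure = first_failure(spec, code, stages)
        if failure is None:
            return code, attempt  # this is where a real pipeline would ship
        feedback.append(failure)  # loop back with what went wrong
    raise RuntimeError(f"gave up after {max_attempts} attempts: {feedback}")


def toy_writer(spec: str, feedback: list[str]) -> str:
    """Hypothetical agent: wrong on the first try, corrected once it sees feedback."""
    if not feedback:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


def validate(spec: str, code: str) -> Optional[str]:
    return None if "def add(" in code else "validation: add() is missing"


def run_tests(spec: str, code: str) -> Optional[str]:
    namespace: dict = {}
    exec(code, namespace)  # toy only; real generated code belongs in an isolated environment
    return None if namespace["add"](2, 3) == 5 else "tests: add(2, 3) should be 5"


code, attempts = run_factory("add two numbers", toy_writer, [validate, run_tests])
print(attempts)
```

In this toy run the first attempt passes validation but fails the tests, so the failure goes back as feedback and the second attempt is the one that gets through.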

The human’s role? Write the spec. Define what “done” looks like. Then let the factory run.

Tyagi’s article describes this with specific engineering rigour: holdout scenarios the coding agent never sees, isolated LLM evaluators, ephemeral environments, phased rollout from human-reviewed to auto-merged. The architecture is sound. The separation between code generation and validation (where the coding agent can never see the test scenarios) is the key insight.
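Here is a small sketch of why that separation matters. It is my own illustration, not Tyagi's implementation: `BuilderContext`, `HoldoutEvaluator`, and both builders are hypothetical, and the evaluator is a plain function rather than an isolated LLM.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Scenario:
    args: tuple
    expected: object


@dataclass(frozen=True)
class BuilderContext:
    """Everything the coding agent may see. Holdout scenarios are deliberately absent."""
    spec: str
    examples: tuple[Scenario, ...]


class HoldoutEvaluator:
    """Owns the holdout scenarios and only ever hands back a score."""

    def __init__(self, holdouts: Iterable[Scenario]) -> None:
        self._holdouts = tuple(holdouts)

    def score(self, candidate: Callable) -> float:
        passed = sum(1 for s in self._holdouts if candidate(*s.args) == s.expected)
        return passed / len(self._holdouts)


def overfit_builder(ctx: BuilderContext) -> Callable:
    """A builder that games what it can see: it memorises the visible examples."""
    table = {s.args: s.expected for s in ctx.examples}
    return lambda *args: table.get(args)


def honest_builder(ctx: BuilderContext) -> Callable:
    """A builder that actually implements the spec."""
    return lambda a, b: a + b


ctx = BuilderContext("add two integers", (Scenario((1, 2), 3),))
evaluator = HoldoutEvaluator([Scenario((2, 2), 4), Scenario((10, -3), 7)])

print(evaluator.score(overfit_builder(ctx)))
print(evaluator.score(honest_builder(ctx)))
```

The memorising builder passes every example it was shown and still scores zero on the holdouts, which is exactly the failure the separation is there to catch.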

But the principles are simpler than the implementation: loops all the way down, gaps filled by skills, processes an agent can walk.

The Inversion Nobody Expects

Here’s the part that surprises people.

We’ve all been led to believe that ideas are a dime a dozen. “Execution is everything,” they say. “Ideas are cheap. Building is what matters.”

Once you unlock the Dark Factory, that flips completely.

When you can output capability at this speed, when the factory can take a spec and produce working software without you writing a line of code, what becomes scarce? Not execution. Not building. Not coding.

Ideas. Creativity. Knowing what to build.

Your ideas are the limiting factor. Because you can output so much capability in such a short amount of time. The only things that will limit you are compute, your capability, and your creativity.

This is a secret most people don’t know and aren’t willing to learn. They won’t find out, because they’ll never get to this point.

Why Most People Won’t Get Here

AI is all fun and everything until it’s not. You have to push through barriers. Those barriers are hard. Let’s not beat around the bush: AI is not easy.

There’s a complete misconception that AI is easy. Just like any other skill, AI needs time to master. A thousand hours to become an expert. Ten thousand hours to become a master. That’s a long time.

And the more you learn, the less you know. You can see the people who are just getting into the game, overconfident, thinking they know more than they do. No matter what you tell them, they don’t believe you. Then when it starts getting harder, they leave. It’s just too hard. They’ll wait for something easier.

“Why don’t you just wait for the big labs to do this?”

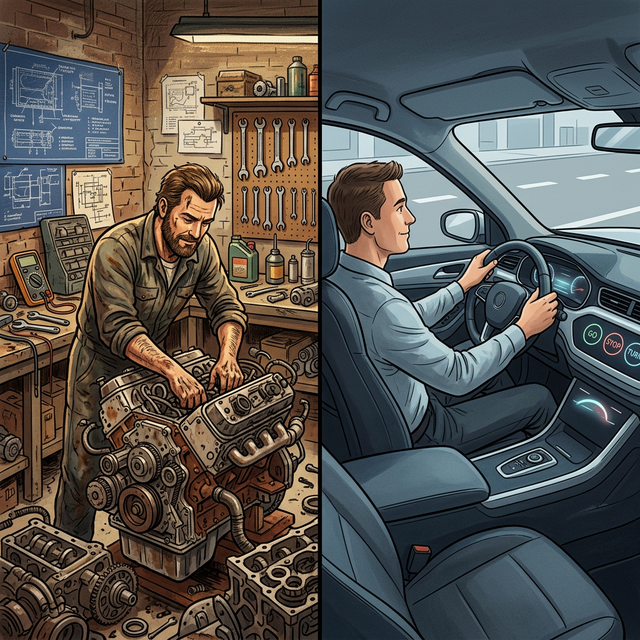

It’s like being a mechanic who knows how to build engines. If you know how the engine works, when you step into a brand new car, you know everything about that car. You know how to upgrade it. You know what to do to make things better. If there’s a problem, you know where to look.

Versus people who just drive the car. It goes, it stops, it turns. That’s the extent of their relationship with it.

The people who build dark factories are going to understand everything about how it all works. They’re the mechanics. Everyone else is a driver.

The Coming-Together Moment

People ask me why I’ve been able to do this. I think it’s just the love of automation. That’s what I’ve done my whole life. Why should we do things by hand for the hell of it if we can automate them?

I’ve always been able to pull out processes and procedures and put them together into a repeatable process. But before AI, the documentation and cognitive load required made doing this at scale impossible. The tooling wasn’t there. The leverage wasn’t there.

This is a coming-together moment. Everything I’ve learned over 25 years about automation, processes, documentation discipline, all of it now has a platform that can actually use it.

Over time you put together capabilities and knowledge. Sometimes they amount to nothing. But as you learn more, you can revisit them, and they add to a bigger picture. This is the amalgamation of tens of thousands of hours of work. When you think you know the stuff, you don’t. And some things you learned years ago, you’re reusing now.

Things aren’t hard when you’re enjoying yourself and learning. My escape is learning. I’ve never felt stuck at a barrier when the only answer is more learning.

What This Means For You

If you’re at level two or three right now, using Copilot, maybe experimenting with agentic coding, here’s what I want you to know:

It all becomes easier. You need to put in the hard work to make it easy. It’s just like learning a process, a computer game, muscle memory. Push through the part where it stops being fun and starts being frustrating, because the other side of that wall is where everything opens up.

Start small. Pick one thing you do repeatedly and try to externalise it. Write it down as a process. Turn it into a skill an agent can follow. See what happens.

Then pick another thing. And another.

You’ll find that each thing you externalise frees up space in your head for the next one. The weight lifts. The factory starts assembling itself.

Or you can wait. Wait for the big labs to build it for you. Wait for someone else to figure it out.

But the people who build their own dark factories will understand everything about how it works. And when the next new car rolls off the line, they’ll already know the engine.

Reflection Questions

I’m curious about your experience:

- Where do you sit on the five levels? Be honest with yourself.

- What cognitive load are you carrying that could be externalised?

- Have you tried writing down a process you do instinctively? How did it go?

- What would you build first if execution were no longer the bottleneck?

Let’s discuss. I want to hear where you are in this journey.

Published: March 9, 2026 | Author: Nick (vanzan01) | Location: Perth, Australia